As two of today's best-known AI models, ChatGPT-4o and Claude 3.7 Sonnet are both designed for speed, intelligence and real-world tasks.

While ChatGPT-4o emphasizes conversational fluency and broad accessibility, Claude 3.7 Sonnet is known for its accuracy, task performance and reasoning capabilities.

Both are available for free, so I put the two powerhouses through a series of tests that challenged their reasoning, creativity and ability to handle multiple complex tasks, and the results seriously surprised me. Here's how these chatbots compare.

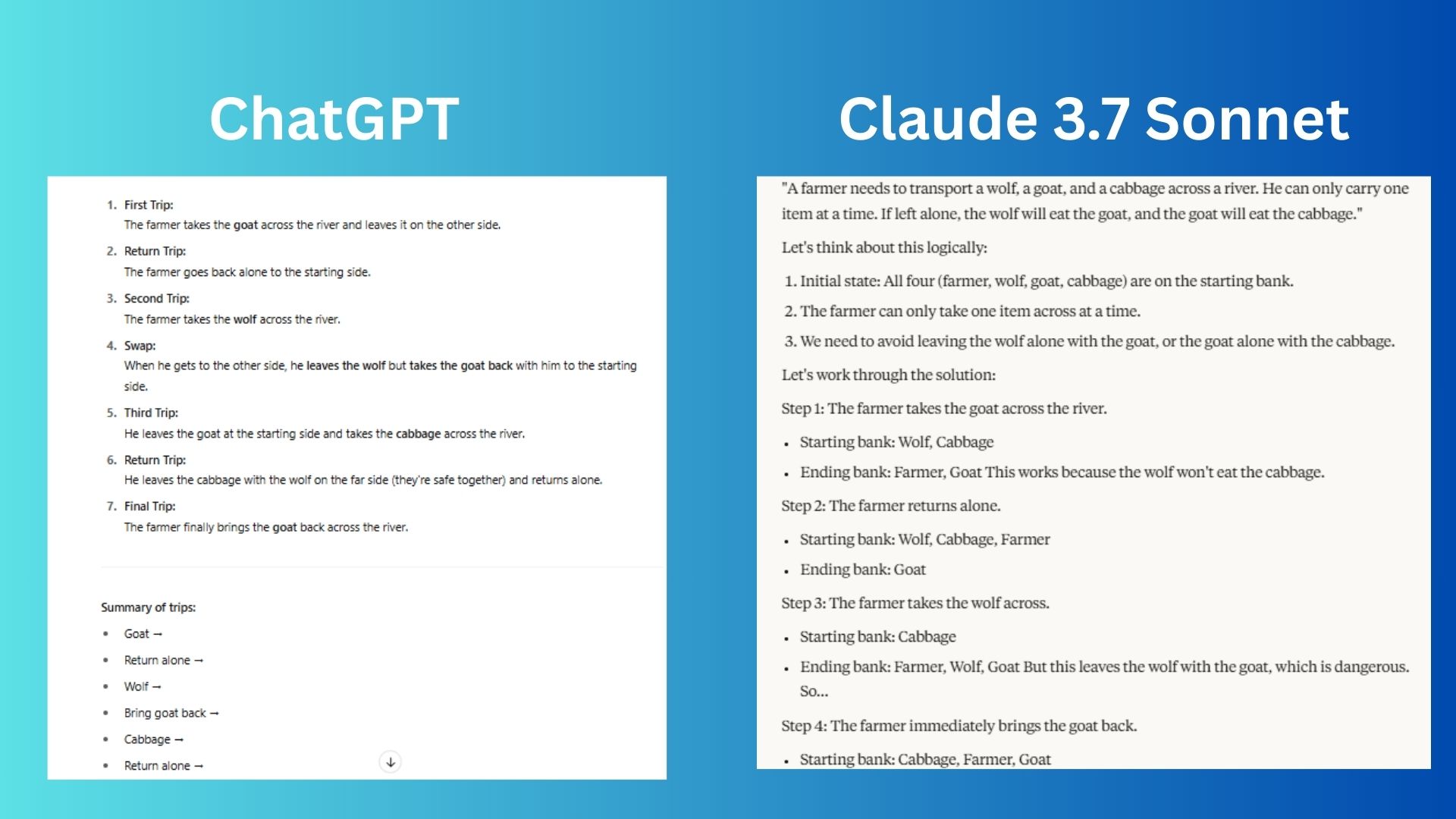

1. The reasoning challenge

Prompt: “A farmer needs to take a wolf, a goat and a cabbage across a river. He can take only one item at a time. If they are left alone together, the wolf will eat the goat, and the goat will eat the cabbage. How should he do it? Solve it step by step.”

ChatGPT gave a clear, phased breakdown with numbered trips and finished with a comprehensive summary for quick reference. It used straightforward sentences that are easy to follow.

Claude clearly explained why certain steps are taken and used step-by-step labeling to make its progress easy to follow.

Winner: Claude wins for a slightly better answer that is fully explained and provides a logical solution.
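The river-crossing puzzle is a small state-space search, which is why a step-by-step answer is easy to check. As a minimal sketch, assuming the classic rules (the farmer rows every crossing and carries at most one item), a breadth-first search in Python finds the shortest sequence of crossings. The function names `is_safe` and `solve` are hypothetical, and this is an illustration rather than either chatbot's answer.

```python
from collections import deque

# Items the farmer can carry; index 0 of a state is the farmer himself.
ITEMS = ("wolf", "goat", "cabbage")


def is_safe(state):
    """state = (farmer, wolf, goat, cabbage); 0 = start bank, 1 = far bank."""
    farmer, wolf, goat, cabbage = state
    if wolf == goat != farmer:
        return False  # wolf and goat left together without the farmer
    if goat == cabbage != farmer:
        return False  # goat and cabbage left together without the farmer
    return True


def solve():
    start, goal = (0, 0, 0, 0), (1, 1, 1, 1)
    queue = deque([start])
    parent = {start: None}  # state -> (previous state, index of item carried)
    while queue:
        state = queue.popleft()
        if state == goal:
            break
        farmer = state[0]
        # The farmer crosses alone (None) or with one item on his own bank.
        for idx in (None, 1, 2, 3):
            if idx is not None and state[idx] != farmer:
                continue
            nxt = list(state)
            nxt[0] = 1 - farmer
            if idx is not None:
                nxt[idx] = 1 - farmer
            nxt = tuple(nxt)
            if is_safe(nxt) and nxt not in parent:
                parent[nxt] = (state, idx)
                queue.append(nxt)
    # Walk back from the goal to rebuild the list of crossings.
    steps = []
    state = goal
    while parent[state] is not None:
        prev, idx = parent[state]
        steps.append("nothing" if idx is None else ITEMS[idx - 1])
        state = prev
    return steps[::-1]


for i, cargo in enumerate(solve(), start=1):
    print(f"Crossing {i}: farmer takes {cargo}")
```

Because breadth-first search explores states in order of crossing count, the first path it finds is a shortest one: seven crossings, starting and ending with the goat.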

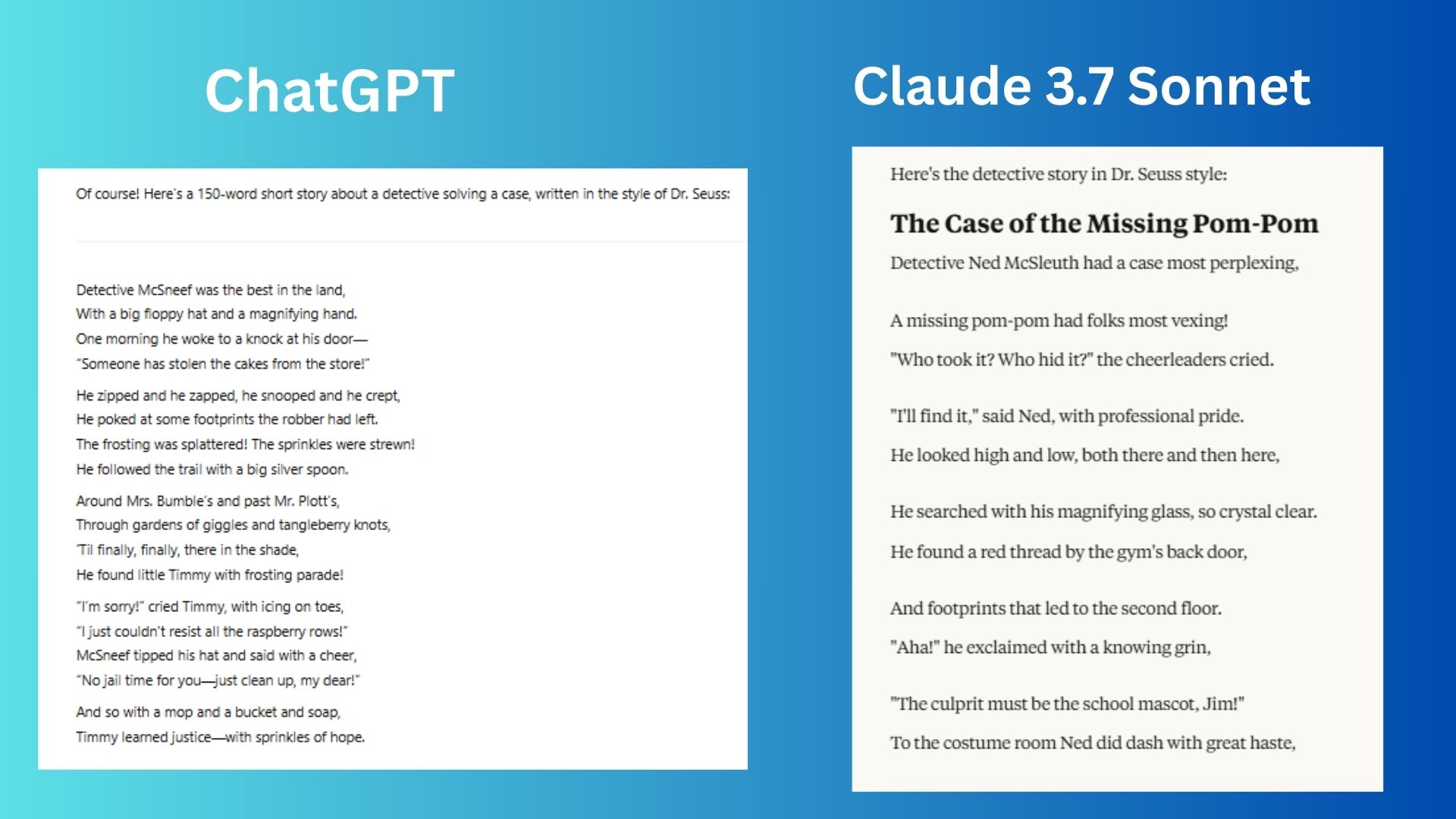

2. The creativity challenge

Prompt: “Write a 150-word short story about a detective who solves a case, but write it in the style of Dr. Seuss.”

ChatGPT created a story with strong rhyme and rhythm that felt very much in the style of Dr. Seuss. Its playful wordplay and clear moral lesson also fit the classic style.

Claude delivered a highly structured Seussian meter, with each line flowing easily into a perfect sing-song rhythm. It also added a clever twist at the end, giving the story more of a detective-story feel.

Winner: Claude wins for its tight execution, which feels more polished and more Seussian. ChatGPT's version is still great, just not quite as good.

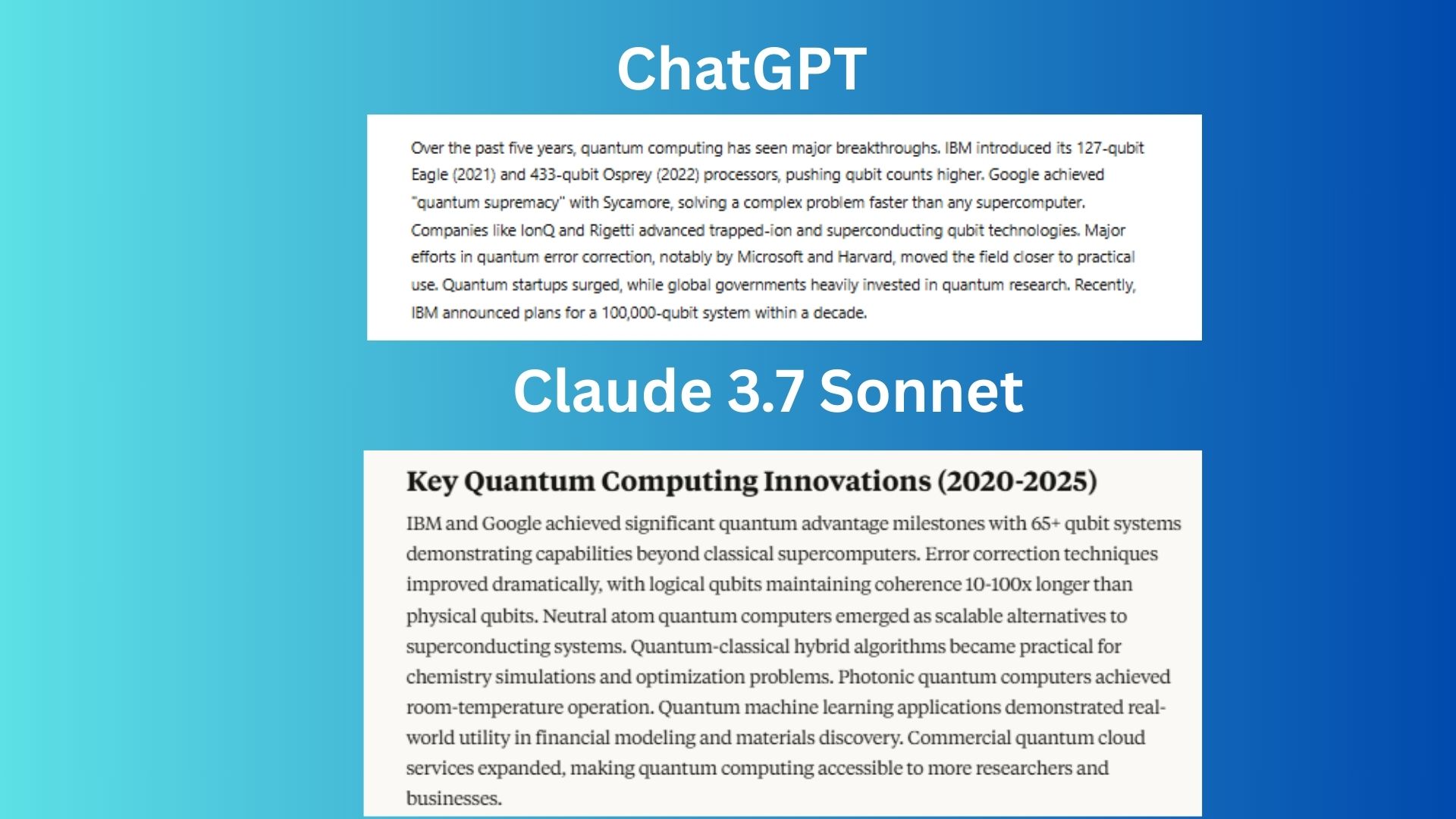

3. The factual challenge

Prompt: “Summarize the key innovations in quantum computing over the past 5 years in 100 words.”

ChatGPT provided clear milestones and timeline markers from key players such as IBM, Google and Microsoft, and included a forward-looking statement.

Claude's summary included comparative measures of recent advances and clearly mentioned practical applications in fields such as chemistry and finance.

Winner: Claude wins because it better balanced technical detail with real-world significance. It offers a more complete picture of error-correction progress, commercial applications and quantum cloud services.

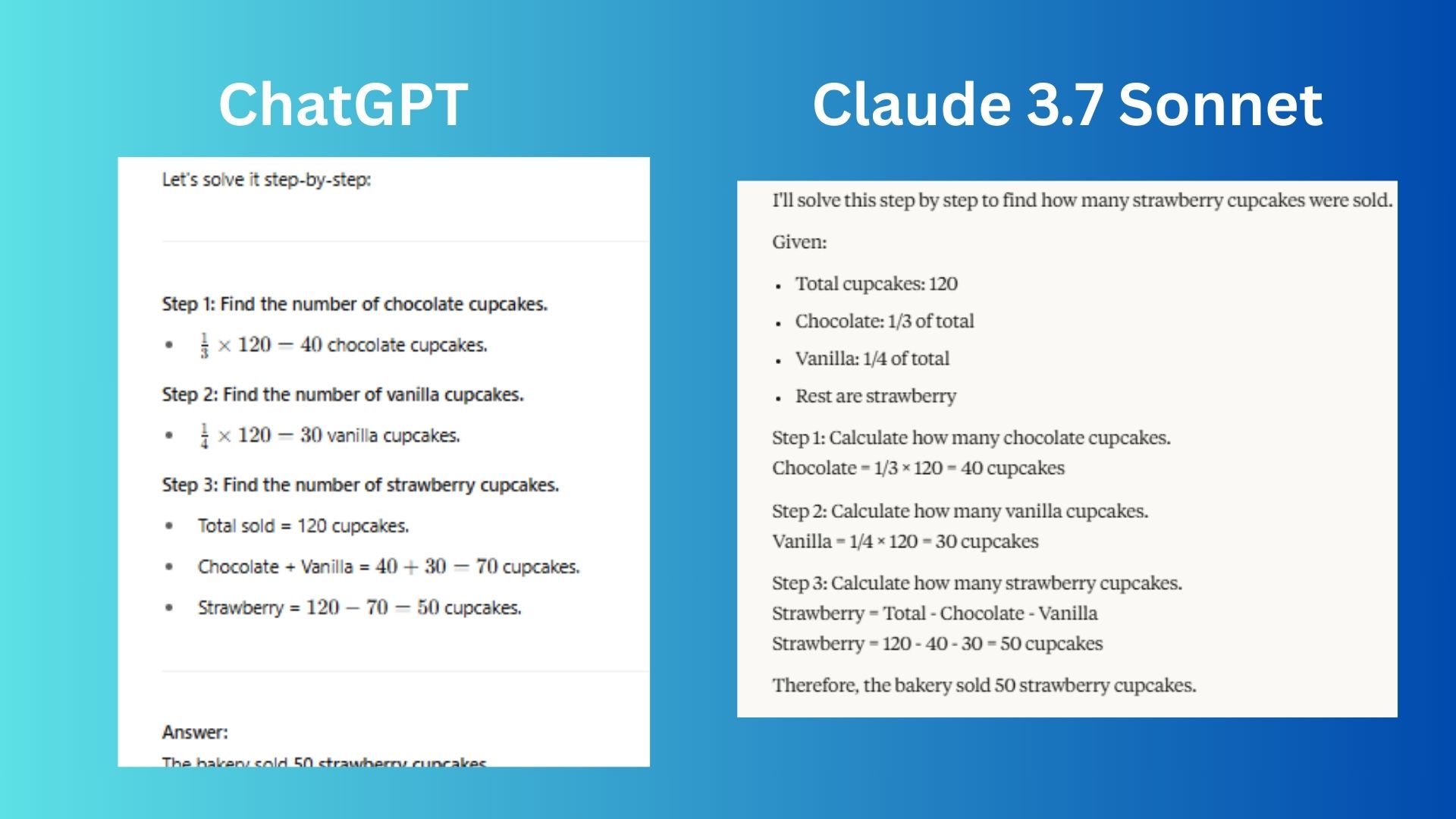

4. The logic challenge

Prompt: “A bakery sold 120 cupcakes in a day. 1/3 were chocolate, 1/4 were vanilla, and the rest were strawberry. How many strawberry cupcakes were sold? Show your work.”

ChatGPT answered the question correctly and clearly showed each step with equations, but the equations were formatted oddly, making them hard to read. In other words, ChatGPT made this problem look harder than it needed to be.

Claude also answered accurately using the same calculation as ChatGPT, but its steps were clearer and the response was easier to read.

Winner: Claude wins for a clearer, more polished response that was easy to follow.
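The arithmetic both chatbots had to show is short: 1/3 of 120 is 40 chocolate, 1/4 of 120 is 30 vanilla, which leaves 120 - 40 - 30 = 50 strawberry. As a minimal sketch (an illustration, not either model's output), Python's `fractions` module checks the answer both ways without rounding error:

```python
from fractions import Fraction

total = 120
chocolate = total * Fraction(1, 3)  # 1/3 of 120
vanilla = total * Fraction(1, 4)    # 1/4 of 120
strawberry = total - chocolate - vanilla

# Same answer via the remaining share: 1 - 1/3 - 1/4 = 5/12 of all cupcakes.
remaining_share = 1 - Fraction(1, 3) - Fraction(1, 4)
assert remaining_share == Fraction(5, 12)
assert strawberry == total * remaining_share

print(f"Chocolate: {chocolate}, vanilla: {vanilla}, strawberry: {strawberry}")
# prints "Chocolate: 40, vanilla: 30, strawberry: 50"
```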

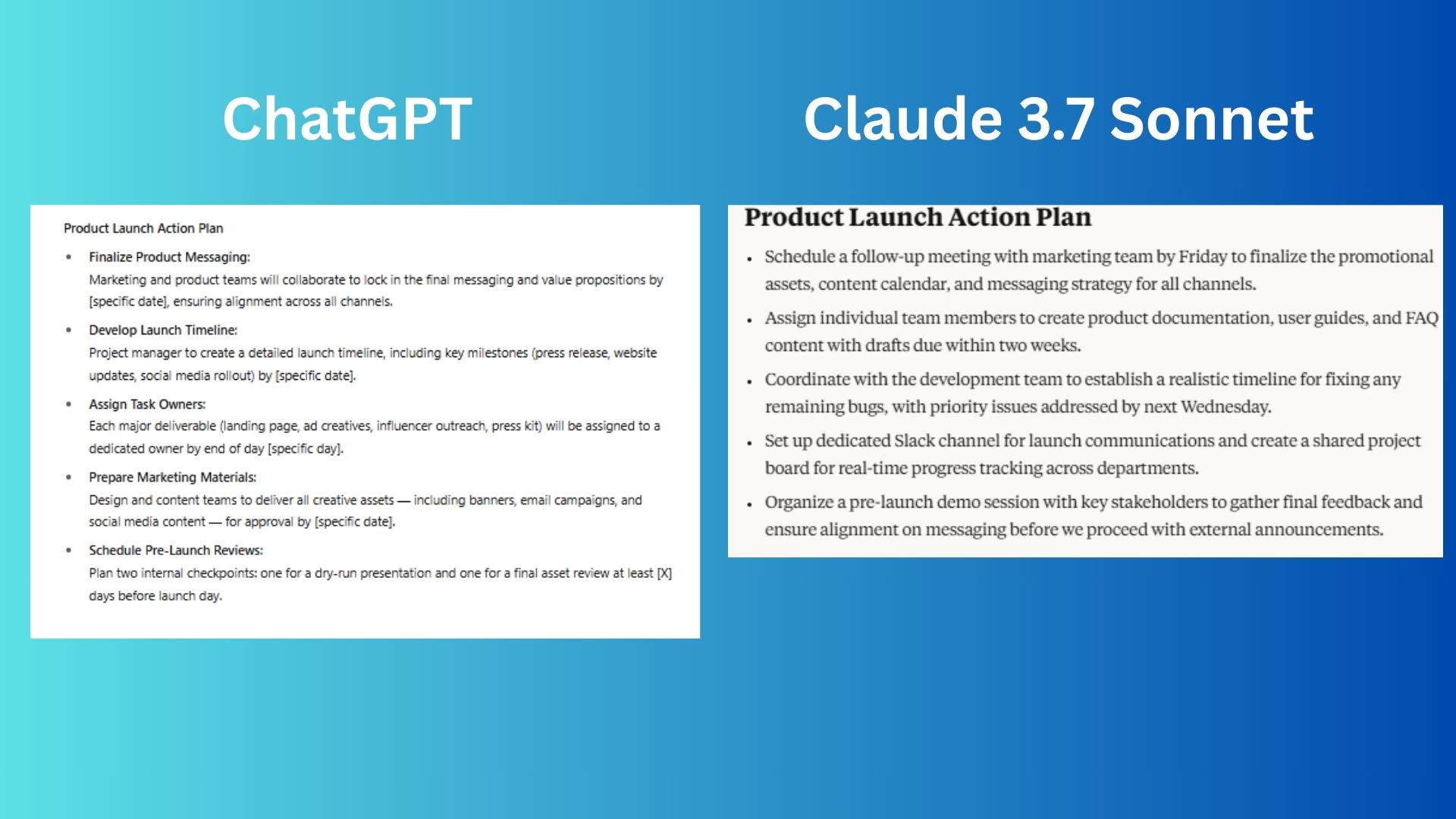

5. The productivity challenge

Prompt: “Imagine that you just sat through a team meeting about a product launch plan. Based on the general conversation (assigning tasks, setting deadlines and finalizing marketing strategies), create a 5-bullet-point action plan with clear next steps.”

ChatGPT provided a very structured, clear 5-step breakdown of the product launch. It included specific deadlines and comprehensive coverage.

Claude set realistic deadlines with more actionable steps. Its plan also covered collaboration tools and stakeholder alignment, which are important for a product launch.

Winner: Claude wins for a more actionable, team-friendly plan. ChatGPT's version is strong, but Claude's plan was better overall.

Overall winner: Claude 3.7 Sonnet

After putting both models through five tough challenges that tested reasoning, creativity, factual knowledge, logic and productivity, Claude 3.7 Sonnet emerged as the clear winner, outperforming ChatGPT-4o.

While ChatGPT performed well with fluent, structured conversational responses, Claude delivered more precise, actionable and polished answers, especially in logical reasoning, real-world application and task performance.

Claude's strengths are its focus on detail, clear explanations and practical implementation, which make it a better choice for analytical tasks, planning and creative storytelling that demands strict formatting.

ChatGPT is a strong all-rounder, especially for accessible, wide-ranging use cases, but if you need sharp accuracy, logical depth or workplace-ready output, Claude may be the better choice.

Final verdict? For most professional and problem-solving needs, Claude 3.7 Sonnet takes the lead, but both models show impressive progress in AI, making them invaluable tools depending on your needs.

More from Tom's Guide