Runway on Friday released a new artificial intelligence (AI) video generation model that can edit elements in input videos. Dubbed Aleph (the first letter of the Hebrew alphabet), the video-to-video AI model can perform a wide range of edits on the input, including adding, removing, and transforming objects; generating new angles and next frames; changing the environment, season, and time of day; and changing the style. The New York City-based AI firm said the video model will soon be rolled out to its enterprise and creative customers, and then to the platform's users.

Runway’s Aleph AI Model Can Edit Videos

AI video generation models have come a long way. We have moved from generating a couple of seconds of an animated scene to producing a complete video with a narrative and even audio. Runway has been at the forefront of this innovation with its video generation tools, which are now used by production houses such as Netflix, Amazon, and Walt Disney.

Now, the AI company has released a new model called Aleph, which can edit input videos. It is a video-to-video model that can manipulate and generate a wide range of elements. In a post on X (formerly known as Twitter), the company called it a state-of-the-art (SOTA) in-context video model that can transform an input video with simple descriptive prompts.

Introducing Runway Aleph, a new way to edit, transform and generate video.

Runway Aleph is a state-of-the-art in-context video model, setting a new frontier for multi-task visual generation, with the ability to perform a wide range of edits on an input video such as… pic.twitter.com/zgdwyedmqm

– Runway (@runwayml) July 25, 2025

In a blog post, the AI firm also showcased some of the capabilities Aleph will offer when it becomes available. Runway said the model will first be provided to its enterprise and creative customers and then, in the coming weeks, released broadly to all its users. However, the wording does not make clear whether free-tier users will also get access to the model, or whether it will be reserved for paid subscribers.

Coming to its capabilities, Aleph can take an input video and generate new angles and views of the same scene. Users can request a reverse low shot, an extreme close-up from the right side, or a wide shot of the entire stage. It can also use the input video as a reference and generate the next frames based on prompts.

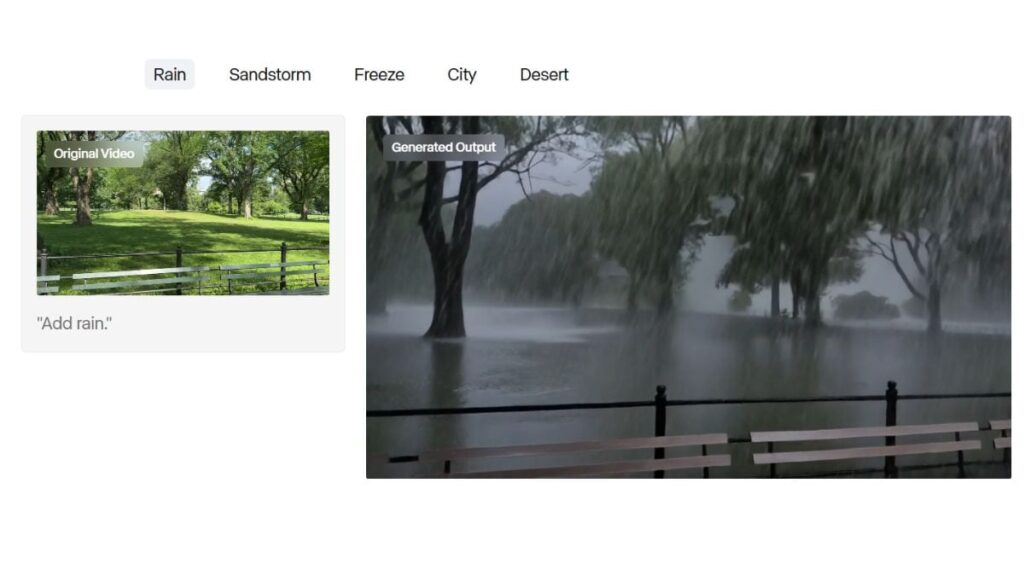

One of the most impressive abilities of the AI video model is transforming environments, locations, seasons, and the time of day. This means users can upload a video of a park on a sunny morning, and the AI model can add rain, a sandstorm, or snowfall, or even make it look like nighttime. These changes are made while keeping all other elements of the video intact.

Aleph can also add objects to the video, remove things like reflections and buildings, replace objects and materials entirely, change a character's appearance, and recolour objects. In addition, Runway claims that the AI model can take a particular motion from a video (think a flying drone sequence) and apply it to a different setting.

Currently, Runway has not shared any technical details about the AI model, such as the supported length of input videos, supported aspect ratios, or application programming interface (API) charges. These details will likely be shared when the company officially releases the model.