ElevenLabs announced the language expansion of its latest artificial intelligence (AI) text-to-speech (TTS) model last week. With this expansion, the AI model now supports 41 new languages, taking the total count to 70 supported languages. The model is now accessible to 90 percent of the global population, said the New York City-based AI startup. Notably, the company released the Eleven v3 (alpha) model on June 8, and introduced it as its “most expressive TTS model”.

Eleven v3 Now Supports 70 Languages

In a post on X (formerly known as Twitter), the official handle of ElevenLabs announced that its latest AI model, Eleven v3, now supports an additional 41 languages. With this update, the model can generate audio from text scripts in a total of 70 languages. Some of the newly added languages include Arabic, Assamese, Bengali, Bulgarian, Catalan, Gujarati, Latin, Malay, Malayalam, Marathi, Nepali, Swahili, Tamil, and Telugu.

The company suggested that those wanting to generate speech in any of the new languages should record an Instant Voice Clone (IVC) while selecting the language. Additionally, ElevenLabs is also adding Voice Library voices for the new languages in the coming weeks.

Eleven v3 is the successor to the Multilingual v2 and v2.5 TTS models. The latest AI model supports inline audio tags such as [whispers], [excited], [sighs], and more. Adding audio tags enables the model to add emotional nuance, non-verbal cues, and dramatic delivery to the generated audio.
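
To make the idea of inline audio tags concrete, here is a minimal Python sketch of how a tagged script might be assembled and inspected before being pasted into a TTS tool. It assumes tags are lowercase words wrapped in square brackets (for example `[whispers]`); the helper names `tag_line`, `extract_tags`, and `strip_tags` are hypothetical and are not part of any ElevenLabs library.

```python
import re
from typing import List

# Assumption: an audio tag is a lowercase word or phrase in square brackets.
TAG_PATTERN = re.compile(r"\[([a-z][a-z ]*)\]")


def tag_line(tag: str, text: str) -> str:
    """Prefix a line of script with an inline audio tag (hypothetical helper)."""
    return f"[{tag}] {text}"


def extract_tags(script: str) -> List[str]:
    """Return the audio tags found in a script, in order of appearance."""
    return TAG_PATTERN.findall(script)


def strip_tags(script: str) -> str:
    """Remove the tags, leaving only the words that would be spoken."""
    without_tags = TAG_PATTERN.sub("", script)
    return re.sub(r"\s{2,}", " ", without_tags).strip()


script = " ".join([
    tag_line("whispers", "I think someone is listening."),
    tag_line("sighs", "Never mind, it was the wind."),
])

print(script)
print(extract_tags(script))
print(strip_tags(script))
```

Keeping the tags in the same string as the spoken text mirrors the inline approach described above: the delivery cue sits right where it should take effect, and the plain text can still be recovered for proofreading.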

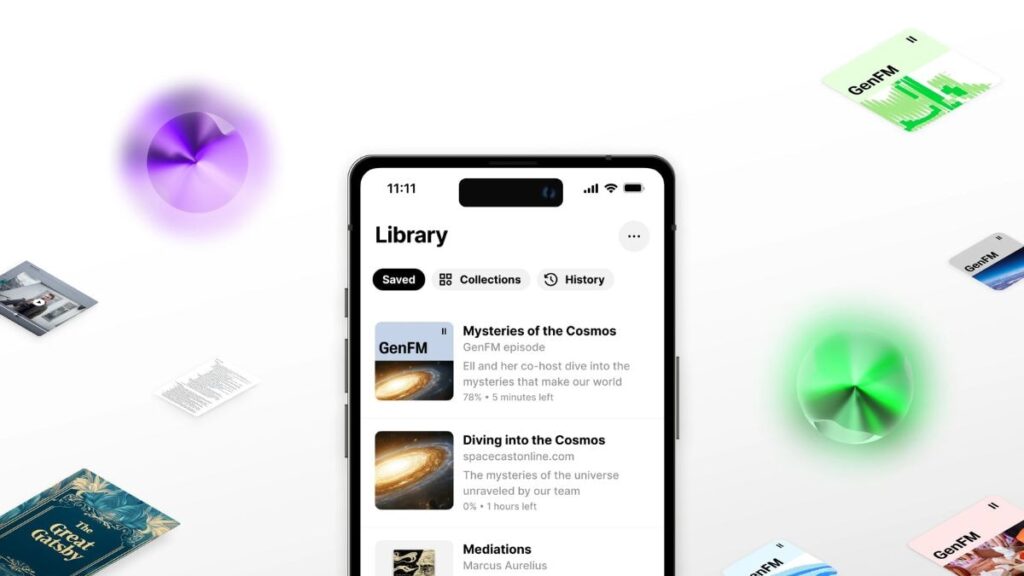

It also supports multi-speaker interaction with interruptions, natural pacing, and overlapping dialogue. Additionally, the company says that the model handles elements such as stress, cadence, and context awareness. Eleven v3 is available via the company’s website and mobile apps. It is currently not available as an application programming interface (API).

In April, ElevenLabs introduced a new enterprise-focused agentic feature dubbed Agent Transfer. Part of the company’s Conversational AI, it allows two AI agents to talk to each other and share context. The feature creates a system where an AI agent can hand off the conversation, along with the context, to another, more specialised agent.
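
The handoff pattern described above can be illustrated with a short Python sketch. This is not the ElevenLabs implementation or its API; the `Conversation`, `Agent`, and `route` names, and the keyword-based routing rule, are all assumptions made for illustration. The point it shows is that the shared context travels with the conversation when a more specialised agent takes over.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Conversation:
    """Shared context that travels with the conversation on handoff."""
    history: List[str] = field(default_factory=list)


@dataclass
class Agent:
    """A hypothetical agent that handles messages on certain topics."""
    name: str
    topics: List[str]

    def reply(self, convo: Conversation, message: str) -> str:
        # Record both sides of the exchange in the shared context.
        convo.history.append(f"user: {message}")
        answer = f"{self.name} handling: {message}"
        convo.history.append(f"{self.name}: {answer}")
        return answer


def route(message: str, current: Agent, specialists: List[Agent]) -> Agent:
    """Pick a specialist whose topic appears in the message, else keep the current agent."""
    lowered = message.lower()
    for agent in specialists:
        if any(topic in lowered for topic in agent.topics):
            return agent
    return current


general = Agent("general", [])
billing = Agent("billing", ["invoice", "refund"])
convo = Conversation()

general.reply(convo, "Hello")
agent = route("I need a refund", general, [billing])
agent.reply(convo, "I need a refund")

print(agent.name)
print(convo.history)
```

Because both agents write to the same `Conversation` object, the specialist sees everything said before the handoff, so the user does not have to repeat themselves.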