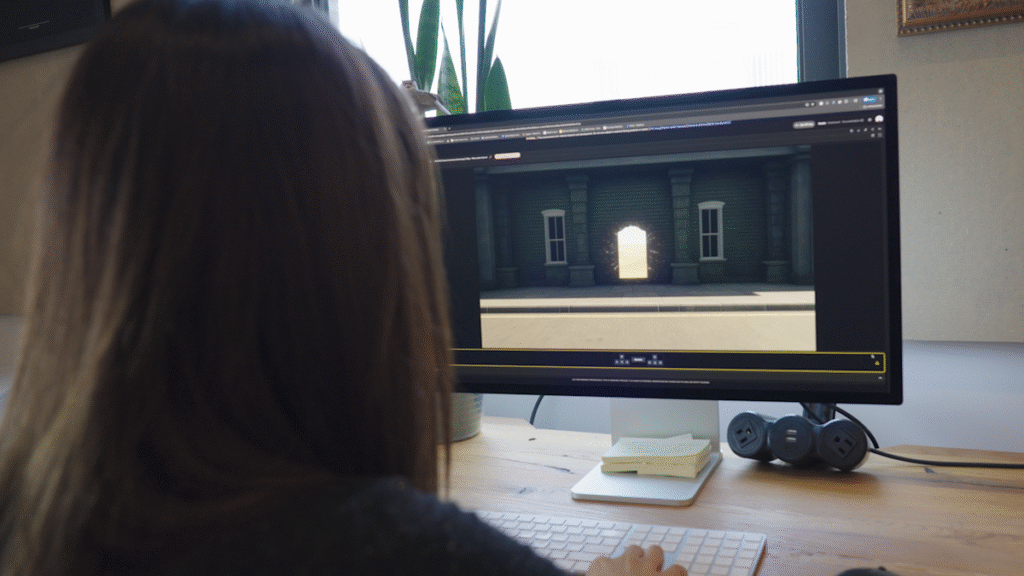

In early December, Google DeepMind released Genie 2. The Genie family of AI systems are what are known as world models. They are capable of generating images as a user, either a human or, more likely, an automated AI agent, moves through the world the software is simulating. The model in action may look like a video game, but DeepMind has always positioned Genie 2 as a way to train other AI systems to be better at what they are designed to do. With its new Genie 3 model, which the lab announced on Tuesday, DeepMind believes it has developed an even better system for training AI agents.

At first glance, the jump between Genie 2 and 3 is not as dramatic as the one the model made last year. With Genie 2, DeepMind's system became able to generate 3D worlds, and it could reconstruct parts of the environment even after the user or AI agent left them to explore other parts of the generated scene. Environmental consistency was often a weakness of earlier world models. For example, Decart's Oasis system had difficulty remembering the layout of the Minecraft levels it would generate.

By comparison, the improvements offered by Genie 3 may seem more modest, but in a press briefing Google held ahead of today's official announcement, Shlomi Fruchter, research director at DeepMind, and Jack Parker-Holder, research scientist at DeepMind, argued that they are important steps toward artificial general intelligence.

Google DeepMind

So what exactly does Genie 3 do? To start, it produces footage at 720p instead of 360p like its predecessor. It is also able to maintain a “consistent” simulation for longer. Genie 2's theoretical limit was up to 60 seconds, but in practice the model often started hallucinating sooner. By contrast, DeepMind says Genie 3 is able to run for several minutes before it starts producing artifacts.

The model also has a new capability DeepMind calls “promptable world events.” Genie 2 was interactive insofar as the user or AI agent could input movement commands and the model would respond after taking a few moments to generate the next frame. Genie 3 does this in real time. In addition, it is possible to adjust the simulation with text prompts that instruct Genie to change the state of the world it is generating. In a demo DeepMind showed, the model was asked to insert a herd of deer into a scene of a person skiing down a mountain. The deer did not move in a very realistic way, but DeepMind says this is the killer feature of Genie 3.

As mentioned earlier, the lab primarily sees the model as a tool for training and evaluating AI agents. DeepMind says Genie 3 can be used to teach AI systems to deal with “what if” scenarios that are not covered by their pre-training. “There are a lot of things that have to happen before a model is deployed in the real world, but we see it as a way to train models more efficiently and increase their reliability,” DeepMind said, pointing to, for example, a scenario in which Genie 3 could be used to train a self-driving car.

Despite the improvements it has made to Genie, DeepMind acknowledges that a lot of work remains to be done. For example, the model cannot create real-world locations with perfect accuracy, and it struggles with text rendering. In addition, for Genie to be truly useful, DeepMind believes the model needs to be able to sustain a simulated world for hours, not minutes. Nevertheless, the lab feels that Genie is ready to make a real-world impact.

“We are already at the point where you wouldn't use [Genie] as your sole training environment, but you can certainly find things you wouldn't want agents to do, because if they act unsafely in some settings, even if those settings aren't perfect, it's still good to know,” DeepMind said. “You can already see where this is going. It will become more useful as the model gets better.”

For the time being, Genie 3 is not available to the general public. However, DeepMind says it is working to make the model available to additional testers.